Many AI pricing strategies fail because teams select a model without first understanding its costs. This guide explains how to develop pricing that protects margins, scales with customers, and adapts to changes in inference costs.

Most software companies now offer AI features, yet many repeat the same pricing mistakes. Relying on intuition, competitor benchmarks, or temporary assumptions—called margin-blind pricing—worked with negligible SaaS marginal costs but fails in AI, where inference costs fluctuate significantly.

Scale is significant. AI-native application spending increased 108% year-over-year, with organizations now averaging $1.2 million annually on these tools (Zylo 2025). Global enterprise AI spending is expected to surpass $250 billion in 2026 (Gartner). While revenue is rising quickly, underlying costs are also increasing.

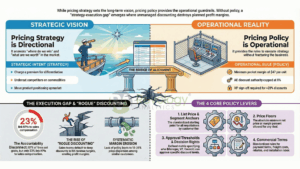

Effective pricing requires discipline. First, map your cost structure before selecting a model. Second, align your price metric with factors customers can predict and control. Third, establish a governance process that reviews pricing as frequently as inference costs change. This guide covers the models, metrics, packaging decisions, and implementation steps that distinguish sound AI pricing from margin-blind guesswork.

Key Insight: LLM inference costs have declined roughly 10× annually since 2022, with GPT-4-equivalent models now available at about $0.40 per million tokens versus $20 three years ago (a16z 2025). Pricing set today will be misaligned within 12 months unless your governance process accounts for this rate of deflation.

What “AI Software Pricing” Means (and What It Doesn’t)

A product manager integrating an AI assistant into a SaaS platform faces different economics than a founder developing an AI-native product or an enterprise buyer assessing platform-level infrastructure costs. Treating these cases as a single pricing discussion exemplifies margin-blind pricing. AI feature pricing covers adding AI to an existing product and deciding whether to absorb or separately meter costs; defaulting to absorption without modeling costs is, by definition, margin-blind. AI platform pricing addresses infrastructure and tends toward pure consumption models.

AI software pricing typically consists of four layers: a platform fee (covering access, core features, and support), a model cost (inference, fine-tuning, embedding generation), a usage charge (API calls, tokens processed, documents analyzed), and services (such as implementation, custom model training, and managed operations). Deciding which layers to expose to customers defines your pricing architecture. Distinguishing these components is essential, as the most common mistake is failing to understand the cost structure sufficiently to select an appropriate model.

Why AI Pricing Is Different from Traditional SaaS

Traditional SaaS has near-zero marginal cost per additional user, whereas AI products incur ongoing costs. This fundamental difference affects every pricing decision and explains why applying the SaaS playbook without adjustment often results in margin-blind pricing.

Inference costs introduce a variable cost of goods sold (COGS) that scales with usage, sometimes unpredictably: a customer submitting 50,000 complex prompts costs more to serve than one submitting 2,000. LLM inference costs have dropped about 10× annually since 2022, with GPT-4-equivalent models now about $0.40 per million tokens versus $20 three years ago (a16z 2025). This ongoing deflation benefits margins, but prices set today will be misaligned within 12 months without regular review.
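As a quick sanity check, the two price points quoted above can be converted into an implied annual rate. The sketch below uses only those figures plus a hypothetical $0.015 per-query cost. This particular price pair works out to a 50× drop over three years, a slower pace than the 10× headline rate, so it may be worth modeling both when planning a review cadence.

```python
# Implied annual price decline from the two price points quoted in the text.
price_then = 20.00   # USD per million tokens, three years ago
price_now = 0.40     # USD per million tokens, today
years = 3

total_drop = price_then / price_now          # overall reduction factor
annual_factor = total_drop ** (1 / years)    # geometric mean reduction per year

# Hypothetical: the same rate applied to a $0.015 per-query cost over 12 months.
cost_per_query_today = 0.015
cost_per_query_next_year = cost_per_query_today / annual_factor

print(f"total drop: {total_drop:.0f}x, per year: {annual_factor:.2f}x")
print(f"cost per query in 12 months: ${cost_per_query_next_year:.4f}")
```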

AI value is often probabilistic; for example, an AI assistant may save three hours per week for a frequent user but provide no benefit to someone who does not use it. This uncertainty complicates willingness-to-pay research and makes adoption patterns less predictable. Additionally, enterprise buyers increasingly treat AI purchases as a separate procurement category, requiring data-handling policies, model-provenance documentation, and audit rights.

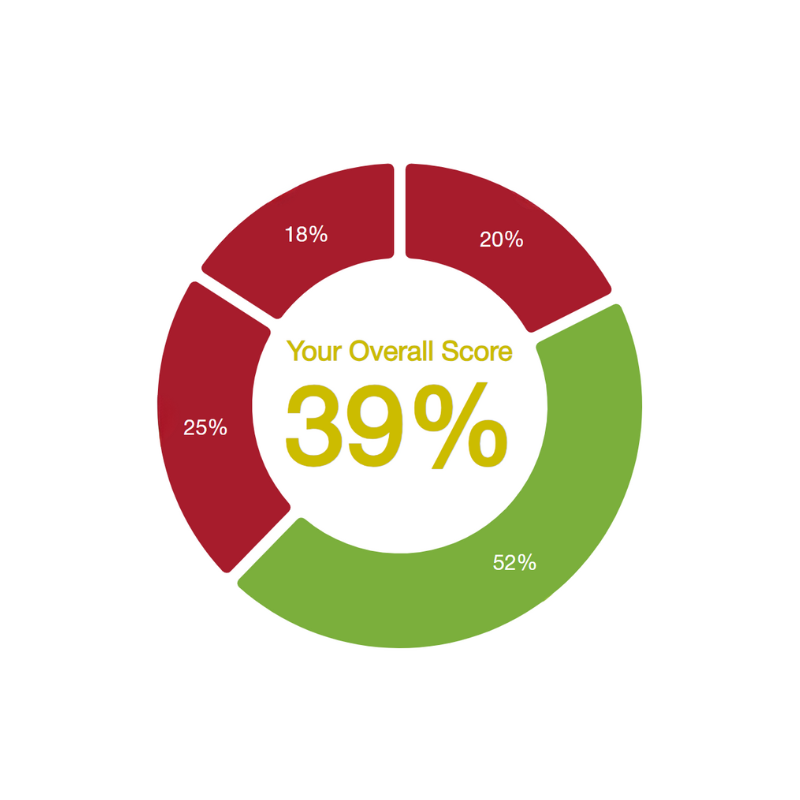

The Main AI Software Pricing Models

Four primary pricing models have emerged, each appropriate for specific scenarios. Selecting a model without analyzing its cost implications is simply another form of margin-blind pricing.

Seat-based or tiered SaaS bundles are effective when AI costs per user are predictable and relatively low. Microsoft’s Copilot ($30/user/month) uses this model. This approach is suitable when per-user inference costs remain below 15–20% of the subscription price, and customers prioritize budget predictability.
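One way to apply the 15–20% guideline is to compute per-seat inference cost as a share of the seat price. This is a minimal sketch: the usage and cost inputs are hypothetical, and `inference_share` is an illustrative helper, not part of any pricing tool.

```python
def inference_share(queries_per_seat, cost_per_query, seat_price):
    """Per-seat monthly inference cost as a fraction of the seat price."""
    return queries_per_seat * cost_per_query / seat_price

# Hypothetical inputs: 300 queries per seat at $0.015 each on a $30 seat.
share = inference_share(queries_per_seat=300, cost_per_query=0.015, seat_price=30.0)
fits_seat_model = share <= 0.20   # upper end of the 15-20% guideline
print(f"inference share of seat price: {share:.0%}, seat model fits: {fits_seat_model}")
```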

Usage-based pricing (tokens, API calls, minutes, documents) charges customers based on actual usage. Eighty-five percent of companies have adopted some form of usage-based pricing, with credit-based models increasing 126% year-over-year (Metronome 2025). This model is best when usage varies significantly across customers, and the cost of goods sold scales directly with consumption.

Credit-based and prepaid consumption models consolidate multiple AI capabilities into a single currency. Customers purchase credit blocks and use them across various features at different rates. This approach is effective when offering multiple AI capabilities with varying cost profiles and when customers seek both budget certainty and flexibility.
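A credit system boils down to a rate card that maps each capability to a credit cost. The sketch below assumes three made-up actions and rates, none of which come from a real product, and shows how a month of mixed usage draws down a prepaid block.

```python
# Hypothetical rate card: credits charged per action.
CREDITS_PER_ACTION = {"summarize": 1, "draft_reply": 2, "extract_contract": 5}

def credits_used(usage):
    """Total credits consumed for a dict of {action: count}."""
    return sum(CREDITS_PER_ACTION[action] * count for action, count in usage.items())

month = {"summarize": 400, "draft_reply": 150, "extract_contract": 20}
used = credits_used(month)     # 400 + 300 + 100 credits
remaining = 1_000 - used       # against a hypothetical 1,000-credit block
print(f"used {used} credits, {remaining} remaining")
```

Setting the per-action rates roughly in proportion to each capability's cost keeps margin stable regardless of which features a customer favors.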

Outcome-, value-, and performance-based pricing models charge for measurable results, such as revenue generated, documents processed accurately, or tickets resolved. Moving away from a flat fee can pay off even without going fully outcome-based: one enterprise software firm that transitioned from a flat fee to a hybrid platform-plus-usage model achieved a 30% increase in recurring revenue and a 25% improvement in customer satisfaction.

In practice, most successful AI products use a hybrid approach: a base platform fee covering core access plus a metered component for AI-intensive operations. This structure protects floor revenue while allowing expansion through usage.

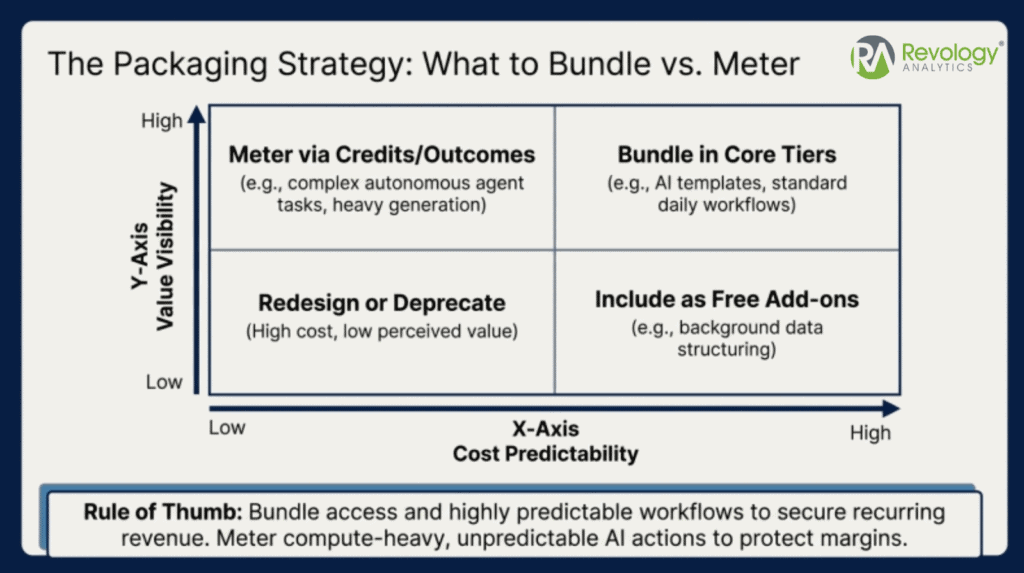

Packaging Strategy: What to Bundle vs. Meter

Packaging defines what customers receive at each price point and is a common source of costly margin-blind pricing. Poorly structured packages can cause margin erosion that cannot be corrected by price increases alone.

Include all baseline value expectations in the platform fee. Meter capabilities that drive variable costs and scale with delivered value. For SaaS pricing optimization, distinguishing between essential and premium features is fundamental.

A Good/Better/Best architecture is effective for most AI products. The Good tier offers self-serve access with usage caps; Better adds team collaboration, higher caps, and priority processing; Best provides enterprise terms, custom model access, and dedicated support. A mid-size SaaS company we advised restructured its packaging using this approach and achieved a 40% revenue increase over two years, primarily due to improved packaging clarity.

Feature gating restricts capabilities by tier, while capacity gating offers the same features but limits usage volume. AI products often prefer capacity gating, as higher volumes increase costs. Add-ons such as SOC 2 compliance, private model deployment, and custom integrations allow you to maintain competitive base pricing while capturing additional value from enterprise requirements. The auto service case study demonstrates how segmented packaging can be applied beyond software.

Step-by-Step Framework to Set AI Software Pricing

A structured approach helps prevent common errors. Using a pricing tool before establishing a clear strategy is a frequent form of margin-blind pricing, as it appears rigorous but overlooks essential cost and value analysis.

Step 1: Define ICP, Jobs-to-Be-Done, and Value Metrics. Document your ideal customer profile and the specific jobs your AI performs. Identify the value metric — the unit of output your customer naturally associates with value. For an AI writing assistant, that might be documents produced; for an AI code reviewer, pull requests analyzed.

Step 2: Map Cost Drivers and Constraints. Build a bottom-up cost model: model/provider rates, infrastructure costs, support load per segment, and human-in-the-loop costs. Understand your marginal cost per unit of customer value at different usage levels. Teams that skip this step cannot distinguish between a 70% gross-margin customer and a 15% one.
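A bottom-up cost model can start as small as the sketch below. The field names and the two sample customers are hypothetical; the inputs were chosen so that two accounts with identical revenue land at the 70% and 15% margins mentioned above.

```python
from dataclasses import dataclass

@dataclass
class CustomerMonth:
    revenue: float          # billed revenue for the month, USD
    queries: int            # metered AI queries
    cost_per_query: float   # blended inference + infrastructure rate, USD
    support_cost: float     # support and human-in-the-loop cost, USD

    def gross_margin(self) -> float:
        cogs = self.queries * self.cost_per_query + self.support_cost
        return (self.revenue - cogs) / self.revenue

light = CustomerMonth(revenue=1000, queries=18_000, cost_per_query=0.015, support_cost=30)
heavy = CustomerMonth(revenue=1000, queries=54_000, cost_per_query=0.015, support_cost=40)
print(f"light user: {light.gross_margin():.0%}, heavy user: {heavy.gross_margin():.0%}")
```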

Step 3: Choose Price Metric(s) and Packaging Architecture. Align your price metric with both the customer’s value perception and your cost structure. The metric should be something the customer can predict, monitor, and control.

Step 4: Set Price Levels, Fences, and Overage Rules. Use willingness-to-pay research (Van Westendorp or Gabor-Granger) to establish price ranges. Define overage policies that protect margins — hard caps, soft caps with alerting, or graduated overage rates. A compliance SaaS provider we worked with restructured its tiered pricing, implemented automated overage guardrails, and achieved a 20% increase in ARR within 3 months.
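Graduated overage rates are the least obvious of the three overage policies to implement, so here is a minimal sketch. The tier sizes and prices are hypothetical, and `overage_charge` is an illustrative helper rather than a billing-system API.

```python
def overage_charge(overage_units, tiers):
    """Charge overage units through graduated tiers.

    tiers is a list of (tier_size, unit_price); a tier_size of None is unbounded.
    """
    charge, remaining = 0.0, overage_units
    for size, price in tiers:
        if remaining <= 0:
            break
        billed = remaining if size is None else min(remaining, size)
        charge += billed * price
        remaining -= billed
    return charge

# Hypothetical schedule: first 1,000 overage queries at $0.04,
# the next 4,000 at $0.03, and everything beyond that at $0.02.
TIERS = [(1_000, 0.04), (4_000, 0.03), (None, 0.02)]
print(f"${overage_charge(6_000, TIERS):.2f}")
```

Declining rates like these reward heavy usage. Before adopting them, check that the lowest tier still clears your per-unit cost.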

Data Inputs You Need Before You Price

Four data inputs are required for deliberate pricing: usage telemetry (actual consumption patterns such as tokens processed, API calls per user, and concurrency peaks), cost modeling (provider rates, infrastructure costs at scale, and support costs by segment), customer value signals (measurable outcomes like time saved, error rates reduced, or revenue attributed to AI-assisted decisions), and market inputs (competitive pricing, win/loss data, and formal willingness-to-pay research). Competitive analysis offers external benchmarks, while internal data establishes your margin floor. Omitting any of these leads back to margin-blind pricing.

Practitioner Note: Most teams underinvest in usage telemetry during early development, resulting in inadequate pricing data at launch. Tracking AI feature usage from the outset is the highest-return pricing investment for AI products.

Core KPIs to Manage AI Pricing and Profitability

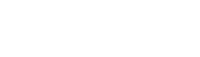

Four key metrics indicate whether your pricing is effective or if margin-blind pricing is undermining your financial performance.

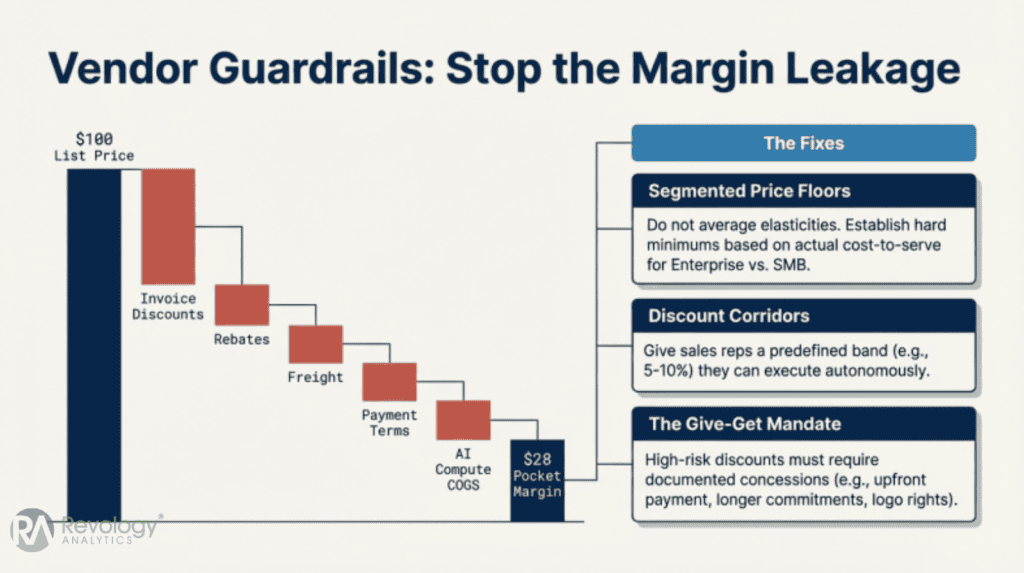

Gross margin by customer and workload: Track margins at the workload level, not just in aggregate. Aim for 70–80% for standard SaaS workloads and 50–65% for AI-intensive operations. Margin waterfall analysis helps identify where leakage occurs. Our engagements show 15–25% gross profit improvements when teams shift from aggregate to workload-level margin tracking.

Net revenue retention and expansion through usage: Leading usage-based companies achieve NRR above 130%, compared to a SaaS median of 106% and an enterprise average of 118% (KeyBanc 2025). Track NRR by cohort to determine if usage growth leads to revenue growth.
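Cohort NRR can be computed from two snapshots of recurring revenue. The sketch below assumes monthly recurring revenue keyed by customer name; the customers and amounts are invented for illustration.

```python
def net_revenue_retention(start_mrr, end_mrr):
    """NRR for a cohort: end-of-period MRR from customers active at the start,
    divided by their starting MRR. Churned customers count as zero."""
    starting = sum(start_mrr.values())
    ending = sum(end_mrr.get(customer, 0.0) for customer in start_mrr)
    return ending / starting

# Hypothetical cohort: two customers expand through usage, one churns.
start = {"acme": 1000.0, "globex": 2000.0, "initech": 1000.0}
end = {"acme": 2500.0, "globex": 2100.0, "newco": 500.0}  # newco is not in the cohort
nrr = net_revenue_retention(start, end)
print(f"NRR: {nrr:.0%}")
```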

Unit economics: Measure contribution margin per 1,000 tokens or per workflow. This metric shows whether pricing covers variable costs at the unit level and identifies the volume required for customer profitability. A negative contribution margin at any usage tier indicates a structural issue that increased volume cannot resolve.
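The unit-economics check can be sketched as two small functions. The prices, costs, and fixed monthly cost below are hypothetical, and the `None` return is simply one way to signal that no volume reaches break-even.

```python
def contribution_margin_per_1k(price_per_1k, cost_per_1k):
    """Contribution margin in USD per 1,000 tokens."""
    return price_per_1k - cost_per_1k

def breakeven_volume_1k(fixed_cost, price_per_1k, cost_per_1k):
    """Thousands of tokens needed to cover a customer's fixed monthly cost."""
    margin = contribution_margin_per_1k(price_per_1k, cost_per_1k)
    if margin <= 0:
        return None   # negative unit margin: more volume only deepens the loss
    return fixed_cost / margin

# Hypothetical: $0.010 price and $0.004 cost per 1K tokens, $30 fixed support cost.
print(breakeven_volume_1k(30.0, 0.010, 0.004))
# Hypothetical underwater tier: price below cost.
print(breakeven_volume_1k(30.0, 0.003, 0.004))
```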

Price realization: Monitor discounting, leakage, and overage capture. The ratio of collected revenue to list price reveals the gap between strategy and execution. For example, a 5% leakage rate on a $10M ARR product results in $500,000 in lost revenue. Effective pricing and revenue management require closing this gap.

Designing Usage Metrics That Customers Accept

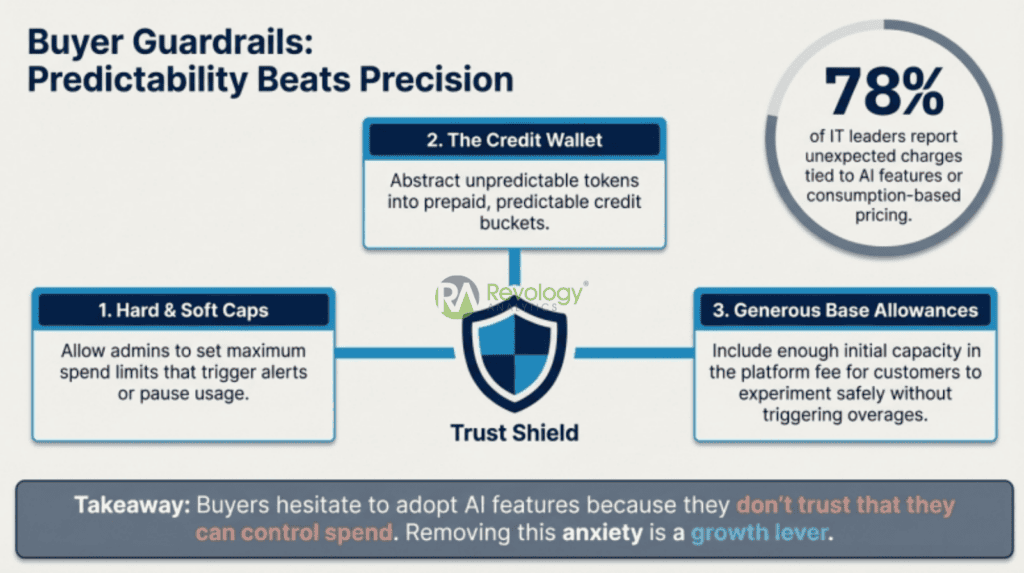

Sixty-five percent of IT leaders report unexpected charges from consumption-based AI pricing, with costs exceeding initial estimates by 30–50% (Flexera 2025). Bill shock is the most visible symptom of margin-blind pricing.

An effective usage metric meets three criteria: customers can estimate it before purchase, monitor it during use, and take action to control it. ‘Tokens processed’ does not meet these criteria for most business users. Metrics such as ‘documents analyzed’ or ‘reports generated’ are preferable, as they align with activities customers already track. Add guardrails that protect both margins and budgets: rate limits, fair-use policies, and anomaly detection that flags unusual spikes before they become billing surprises. Proactive usage communication (real-time dashboards, threshold alerts at 50%, 75%, and 90% of allocation, and optional budget caps) reduces bill shock and builds trust.
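Threshold alerts are simple to sketch. The function below assumes usage is checked after each metering update and reports only the thresholds newly crossed, so each alert fires once; the 4,000-query allocation is hypothetical.

```python
ALERT_THRESHOLDS = (0.50, 0.75, 0.90)   # share of allocation, as described above

def crossed_thresholds(used_before, used_after, allocation):
    """Thresholds crossed by the latest usage increment."""
    before, after = used_before / allocation, used_after / allocation
    return [t for t in ALERT_THRESHOLDS if before < t <= after]

# Hypothetical 4,000-query allocation; usage jumps from 1,900 to 3,100.
print(crossed_thresholds(1_900, 3_100, 4_000))
```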

Industry Micro-Scenarios

The pricing dynamics described above vary by sector. The following three examples illustrate how the same framework can be adapted to different industries.

Healthcare AI (clinical decision support). A diagnostic imaging company prices its AI triage tool per study analyzed, with a minimum monthly commitment baked into the radiology group’s contract. The outcome metric (studies triaged) directly tracks the radiologist’s workload, making it easy for buyers to predict costs. Regulatory requirements add a compliance add-on tier (HIPAA audit logging and model explainability documentation) that lifts average deal size 15–20% above the base platform fee.

Industrial / manufacturing AI (predictive maintenance). A predictive-maintenance vendor charges a monthly price per connected asset, with overages when anomaly-detection inference exceeds the included allocation. Plant operators accept this metric because they already budget per machine. The nuance: seasonal production ramps can triple inference volume for 8–12 weeks, so the vendor offers a prepaid “surge credit pack” to smooth billing and prevent churn during peak production.

Financial services AI (document extraction and compliance). A regtech firm prices its AI contract-review tool per document processed, with a Good/Better/Best tier structure gated by document complexity (standard contracts vs. bespoke agreements vs. multi-jurisdictional instruments). Per-document pricing aligns with the compliance team’s existing cost-per-review budgeting. The firm embeds an outcome guarantee — 95% extraction accuracy SLA — that justifies a 2× premium over competitors who price on tokens alone.

Common Pitfalls (and How to Avoid Them)

Even well-designed pricing architectures can deteriorate without operational discipline. The following pitfalls represent specific forms of margin-blind pricing.

Pricing based on a metric that does not reflect value: If your price metric (such as tokens) does not correlate with customer value (such as decisions improved), you will encounter ongoing resistance. Survey customers to identify the unit of work that best represents the value your AI delivers and compare it to your current metering approach.

Underestimating long-tail usage: A small percentage of customers often generate disproportionately high inference costs. The top decile of customers by AI cost-to-revenue ratio typically consumes 8–12 times the median. If your pricing does not account for this distribution, your aggregate margin calculations are inaccurate.
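To see whether this long tail exists in your own base, compare the top decile's AI cost-to-revenue ratio with the median. The sketch below uses a made-up list of 20 customer ratios; `top_decile_multiple` is an illustrative helper.

```python
from statistics import median

def top_decile_multiple(cost_to_revenue):
    """Mean cost-to-revenue ratio of the top 10% of customers, divided by the median."""
    ratios = sorted(cost_to_revenue)
    top_n = max(1, len(ratios) // 10)
    top_mean = sum(ratios[-top_n:]) / top_n
    return top_mean / median(ratios)

# Hypothetical base: 18 customers at a 5% ratio and 2 heavy users at 50%.
ratios = [0.05] * 18 + [0.50] * 2
multiple = top_decile_multiple(ratios)
print(f"top decile runs at {multiple:.0f}x the median cost-to-revenue ratio")
```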

Confusing packaging with pricing: Limit your offering to three tiers and two to three clear add-ons. Exceeding this level of complexity can lengthen sales cycles and prompt customers to choose the lowest-priced option.

Misaligned sales compensation: If compensation rewards bookings without considering usage-based margin, over-discounting is inevitable. Align compensation with gross or contribution margin, not just top-line ARR. Maintain ownership of your pricing analytics to address this gap.

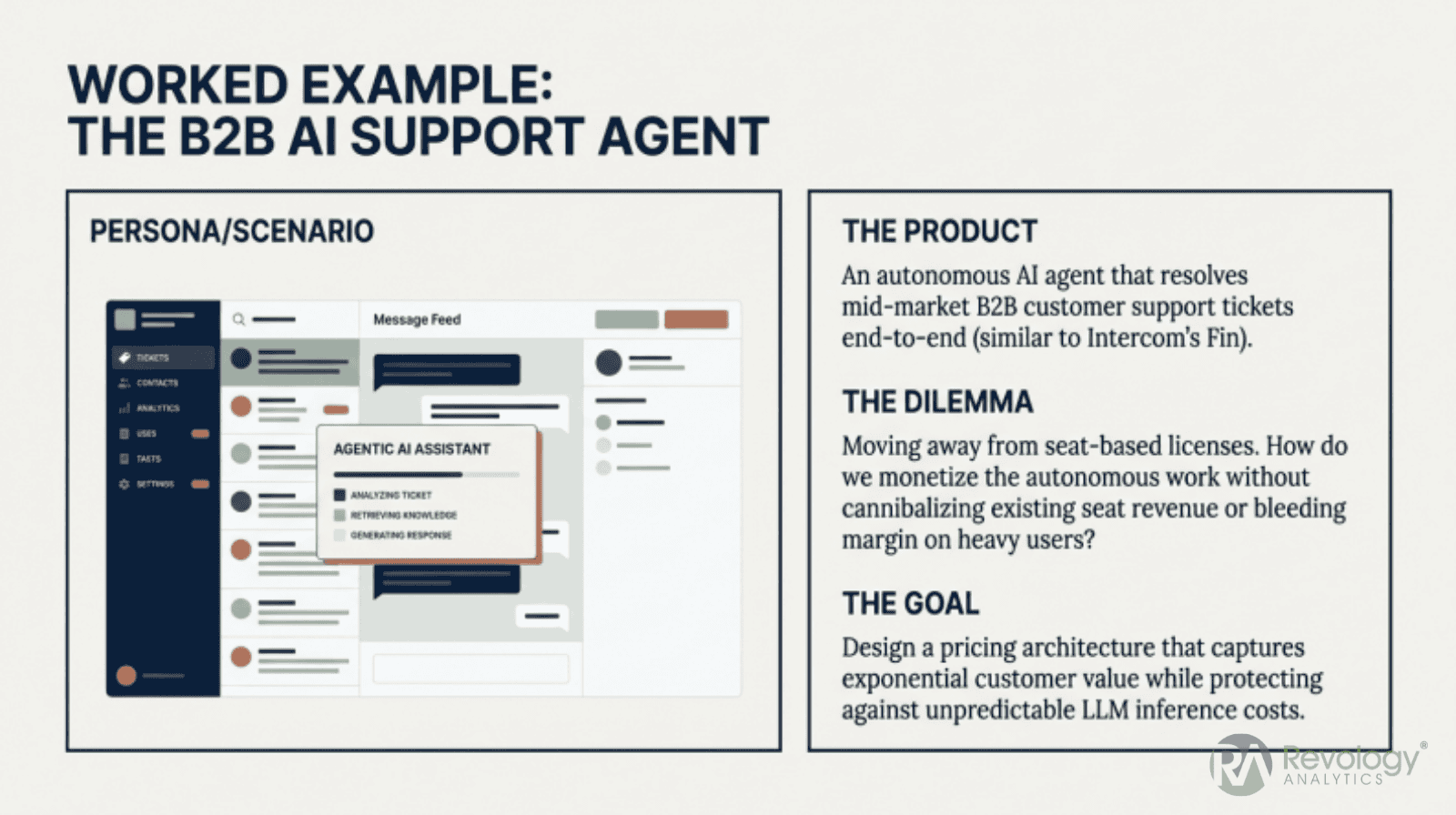

Worked Example: Pricing an AI Assistant Feature for a B2B SaaS

Consider a mid-market B2B SaaS product ($50K average ACV) adding an AI assistant that summarizes documents, drafts responses, and answers questions about the customer’s data.

Step 1: Establish Your Cost Baseline

Average usage per seat: 500 queries/month. Cost per query (inference + infrastructure): $0.015. Average 20 seats per customer. Support load increase: ~$20/month per customer. Monthly AI cost per customer: 20 × 500 × $0.015 = $150 inference + $20 support = $170/month ($2,040/year, or 4.1% of ACV).

Step 2: Model Three Pricing Options Against Break-Even

Option A — Seat bundle: $8/seat/month added. Revenue: $160/month. Margin after AI COGS: –$10/month (negative 6%). Structurally unprofitable at current usage.

Option B — Credit system: $0.05/query (3.3× markup). Revenue at average usage: $500/month. Margin: $330/month (66%). Risk: bill shock — 65% of IT leaders report unexpected AI charges (Flexera 2025).

Option C — Hybrid: Include 200 queries/seat/month in the platform, charge $0.04/query for overages. Revenue at average usage: $100 base + $240 overage = $340/month. Margin: $170/month (50%). Break-even: positive even at zero overage revenue.
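The three options can be checked side by side in a few lines. This sketch uses only the figures from the worked example and treats the hybrid's $100 base as a flat monthly amount, as the text does.

```python
SEATS, QUERIES_PER_SEAT, COST_PER_QUERY, SUPPORT = 20, 500, 0.015, 20.0

queries = SEATS * QUERIES_PER_SEAT
cost = queries * COST_PER_QUERY + SUPPORT    # monthly AI cost per customer

revenue = {
    "A: seat bundle": SEATS * 8.0,                         # $8 per seat
    "B: credits": queries * 0.05,                          # $0.05 per query
    "C: hybrid": 100.0 + (queries - SEATS * 200) * 0.04,   # base + overage
}
margins = {name: (rev - cost) / rev for name, rev in revenue.items()}

for name, rev in revenue.items():
    print(f"{name}: revenue ${rev:.0f}, margin {margins[name]:.0%}")
```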

Step 3: Run Margin Sensitivity on the Hybrid Model

| Scenario | Revenue | Cost | Margin |
| --- | --- | --- | --- |
| Usage doubles (1,000 queries/seat) | $740/mo | $320/mo | 57% |
| Inference costs drop 50% | $340/mo | $95/mo | 72% |
| Only 40% of users adopt | $100/mo | $80/mo | 20% |
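The sensitivity scenarios above can be reproduced with one parameterized function. One assumption is worth flagging: the 40% adoption row only yields $100 in revenue if the included allocation is pooled across all seats, so the sketch pools it. `hybrid_month` and its parameter names are illustrative.

```python
def hybrid_month(seats=20, active_share=1.0, queries_per_active_seat=500,
                 cost_per_query=0.015, support=20.0,
                 base_fee=100.0, included_per_seat=200, overage_price=0.04):
    """Revenue, cost, and margin for the hybrid option.

    Assumes the included allocation is pooled across all seats.
    """
    queries = seats * active_share * queries_per_active_seat
    overage = max(0.0, queries - seats * included_per_seat)
    revenue = base_fee + overage * overage_price
    cost = queries * cost_per_query + support
    return revenue, cost, (revenue - cost) / revenue

scenarios = {
    "usage doubles": hybrid_month(queries_per_active_seat=1000),
    "inference costs drop 50%": hybrid_month(cost_per_query=0.0075),
    "only 40% of users adopt": hybrid_month(active_share=0.4),
}
for name, (rev, cost, margin) in scenarios.items():
    print(f"{name}: revenue ${rev:.0f}, cost ${cost:.0f}, margin {margin:.0%}")
```

If your billing applies the allocation per seat rather than pooled, low-adoption accounts would generate overage revenue and the third row would look better than shown.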

Step 4: Compare and Decide

| Metric | Option A (Seat) | Option B (Credits) | Option C (Hybrid) |
| --- | --- | --- | --- |
| Monthly revenue | $160 | $500 | $340 |
| Gross margin | –6% | 66% | 50% |
| Break-even usage | Below current | About 570 queries/month (~6% of average) | Positive at zero overage |
| Bill shock risk | None | High | Low |

The hybrid model delivers a 50% gross margin while maintaining predictable base costs for customers. In contrast, the pure seat-bundle approach is structurally unprofitable and exemplifies margin-blind pricing within a traditional SaaS model.

Step 5: Define the Launch Offer and Overage Guardrails

Launch with Option C: include 200 queries per seat per month, with a $0.04 per query overage. Set automated alerts at 80% and 95% of the included allocation. Offer a ‘usage insurance’ add-on at $3 per seat per month to increase the included allocation to 350 queries. Monitor actual usage distribution for 90 days and adjust based on observed P25, P50, and P90 usage bands.
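Once 90 days of telemetry are in, the usage bands can be computed with the standard library. The observed values below are hypothetical; the sketch uses `statistics.quantiles` with the inclusive method and reads P25, P50, and P90 from the percentile cut points.

```python
from statistics import quantiles

def usage_bands(queries_per_seat):
    """P25, P50, and P90 of observed monthly queries per seat."""
    cuts = quantiles(queries_per_seat, n=100, method="inclusive")  # 99 cut points
    return {"p25": cuts[24], "p50": cuts[49], "p90": cuts[89]}

# Hypothetical monthly queries per seat across ten seats.
observed = [40, 90, 150, 180, 220, 260, 310, 420, 560, 900]
bands = usage_bands(observed)

# Share of seats above the 200-query included allocation.
share_over_allocation = sum(q > 200 for q in observed) / len(observed)
print(bands, f"{share_over_allocation:.0%} of seats exceed the allocation")
```

If the median sits well above the included allocation, most customers pay overages every month, which may argue for raising the allocation or the base fee.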

What to Communicate Internally and Externally

Internal (product, finance, sales, customer success): Share the cost baseline, three-option analysis, and margin sensitivity table. Sales require break-even calculations to support the hybrid model over seat-bundle requests. Finance needs scenario analysis for realistic margin targets. Customer success needs overage alert thresholds to proactively manage customer communications.

External (customers and prospects): Emphasize value and predictability rather than cost structure. Present the included allocation as generous headroom and position overages as ‘pay only if you receive additional value.’ Provide a usage calculator to help buyers estimate monthly costs before signing. Avoid highlighting per-query costs; instead, describe overages as ‘additional capacity at $X per block of 100 queries.’ Clearly feature the 80% and 95% alert thresholds in sales materials to demonstrate budget protection.

Implementation Roadmap (0–90 Days)

Weeks 0–2: Implement usage tracking for AI features. Develop a per-customer cost model. Identify the top and bottom deciles of customers by AI cost-to-revenue ratio.

Weeks 3–6: Design two to three packaging options. Conduct lightweight willingness-to-pay research through 15–20 customer interviews. Update terms of service for usage-based components. Test each option against break-even analysis.

Weeks 7–10: Configure billing for metered components. Build customer-facing usage dashboards. Create sales enablement materials and align compensation with contribution margin.

Weeks 11–13: Launch to a cohort. Monitor the four key performance indicators. Establish a monthly pricing governance process with Product, Finance, Sales, and Customer Success. Disciplined implementation typically results in 15–25% gross profit increases, over 20% reduction in churn, and LTV:CAC ratios of 9:1 to 11:1.

Contracting and Billing Considerations

Enterprise deals and minimum-commitment structures protect your revenue floor and provide customers with cost certainty. True-ups reconcile actual usage with committed volumes, while prepaid credit packages offer a balanced approach. AI-specific contract terms should address model availability SLAs, data retention policies, permitted use cases, and disclaimers regarding output accuracy. Detailed invoices that separate platform fees from usage charges and show included allocations versus overages help reduce billing disputes and enhance transparency.

FAQ

How Much Does AI Software Cost?

Enterprise AI tools range from $20–30/user/month for bundled features (Microsoft Copilot, Salesforce Einstein) to thousands per month for usage-intensive platforms. Global enterprise AI spending is projected to exceed $250 billion in 2026 (Gartner). Your specific costs depend on model choices, usage volume, and whether you build or buy.

What Are the 4 Types of AI Software?

Conversational AI (chatbots, assistants), analytical AI (predictive models, BI), generative AI (content creation, code generation), and autonomous AI (agents, process automation). Each carries different cost structures and pricing implications.

How Much Is the Price of AI?

For end users, AI pricing spans from free tiers through $20–30/month individual plans to enterprise contracts at $50K–500K+ annually. For companies building AI into their products, the relevant cost is inference: currently $0.10–$15 per million tokens, depending on the model’s capabilities. The worked example above shows how to model these costs to arrive at a defensible price point.

What Is the AI Pricing System?

An AI pricing system is the combination of pricing model, packaging architecture, metering infrastructure, and governance process that determines how you monetize AI capabilities. The absence of any component, particularly governance, produces margin-blind pricing.

Can I Build My Own AI for Free?

You can prototype using free-tier APIs and open-source models. But “free” is misleading at production scale: infrastructure, engineering time, monitoring, and support add up. Most teams that start with “we’ll build it ourselves for free” discover per-query costs of $0.02–$0.10 once they account for all inputs.

Why Do 85% of AI Projects Fail?

Most failures trace to misalignment between the technical solution and the business problem. Pricing contributes when it creates adoption barriers or usage anxiety. Sound pricing architecture — with included allocations, clear guardrails, and predictable metrics — directly reduces the adoption friction that sinks AI initiatives.

When to Get Help: Signs Your AI Pricing Needs a Reset

Margin-blind pricing is rarely obvious. It manifests through operational symptoms that may appear manageable individually but collectively indicate a structural issue.

Margin compression despite revenue growth: If revenue increases but gross margin percentage declines, especially among high-usage customers, the pricing architecture is likely the cause. A drop of more than 3 points in overall gross margin, alongside increased AI adoption, signals this issue.

Customers surprised by bills or churning after overages: If your customer success team frequently explains invoices, your usage metric or guardrail design requires review.

Sales cycles stalling due to pricing complexity: If prospects require multiple pricing discussions or win/loss analysis identifies ‘pricing confusion’ as a top reason, your packaging may be too complex for customers to evaluate effectively.

Usage growth not translating into revenue: If AI feature adoption is strong but revenue growth lags, this indicates that your pricing metric, packaging structure, or overage policy needs adjustment.

Diagnostic Checklist and Next Steps

Before your next pricing review, consider these five questions: Do you know your per-unit AI cost at the customer level? Does your price metric align with something your customer already tracks? Is sales compensation aligned with the AI-component margin? Do you have a pricing governance process? Can you explain your packaging structure based on your own cost and value data, rather than a competitor’s pricing page?

If resources are limited, begin with three actions: track AI feature usage at the per-customer level, interview 15–20 customers about perceived value and cost expectations, and explicitly model AI cost of goods sold for your top-decile customers. These inputs provide sufficient data to make an informed initial pricing decision.

If these challenges sound familiar, Revology Analytics can conduct a focused AI pricing diagnostic to identify margin leakage, determine the responsible metric, and recommend changes for the next 90 days.